Over the last few months, I have followed a lively debate among my academic colleagues who teach various quantitative methods courses on how to adapt teaching in the era of LLMs. Many are grappling with what these tools mean for how we teach, how we assess, and whether the skills we've emphasized still matter. Several have developed impressive and creative approaches to incorporating LLMs into their teaching. At the same time, colleagues at Samvid Inc (where I serve as an advisor) have been developing tools that professors can use to enhance pedagogy, which has forced me to think about these questions practically, not just in the abstract.

While we wrestle with this question, it’s worth remembering that this is not the first time we’ve faced this problem. A few decades back, the emergence of tools like Excel posed the same question. So before trying to answer how teaching should evolve now, I wanted to step back and think about what role teaching plays in the overall ecosystem of “solving a business problem.”

What Does Solving a Business Problem Actually Look Like?

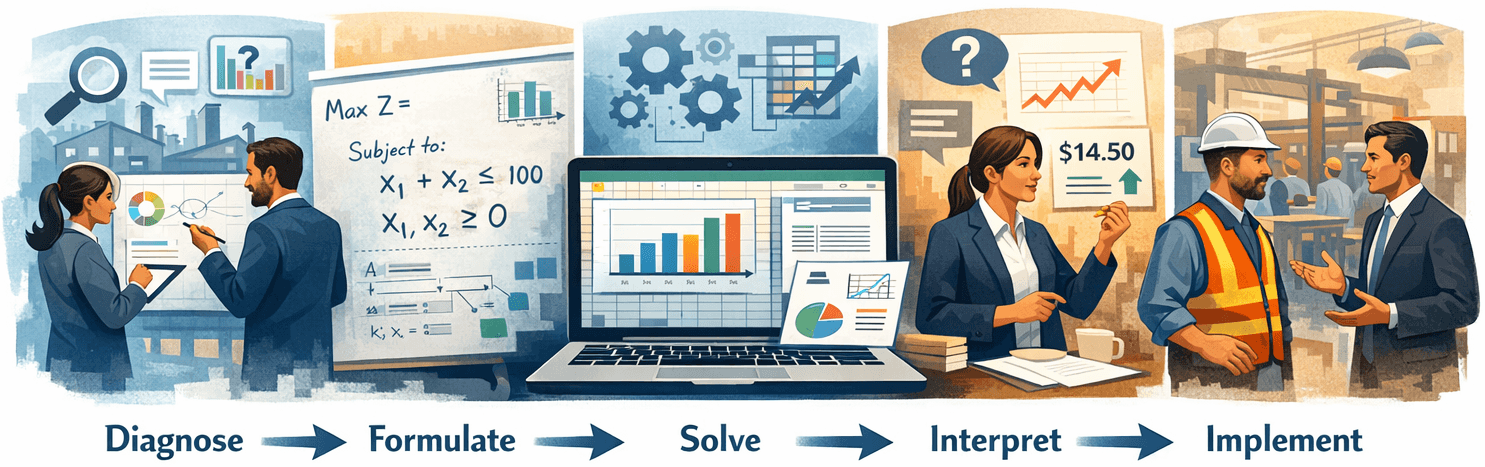

When I think about what an analytically trained professional does (whether they’re optimizing a supply chain, forecasting demand, or figuring out the root causes of waiting time in a clinic), a simplified version of the workflow looks something like this:

Diagnose → Formulate → Solve → Interpret → Implement

Diagnose is where it all starts. You walk into a messy situation. Nobody tells you “this is a regression problem” or “you need to solve an LP.” You talk to people, obtain and review the data, observe symptoms, and then try to figure out what type of problem you’re looking at. Is this a capacity issue? A forecasting issue? A process flow issue? The problem is never cleanly stated, and figuring out the problem is the problem.

Formulate is the translation step where you set up the problem in a formal way so that tools can be applied to it. This involves defining variables, writing the objective function, specifying constraints, choosing a model, and labeling the data. This is where “business context” is translated into a “math problem.”

Solve is the part where appropriate tools are applied to arrive at the solution. This may involve running a regression, solving an LP, or finding the bottleneck or critical path using the appropriate formulae and procedures.

Interpret is where we make sense of the output. Does the answer hold up? What does it actually mean for the business? This is where you check whether the model’s assumptions are reasonable and whether the sensitivity analysis reveals fragility, and where you translate “the shadow price on Constraint 2 is $14.50” into “an extra hour of machine time is worth $14.50 to us, so an overtime shift pays for itself as long as it costs less than that per hour.”
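To make the shadow-price translation concrete, here is a minimal Python sketch. The two-product factory, its profit margins, and its hour limits are all invented for illustration (chosen so that the machine-hour constraint happens to be worth $14.50 per hour), and `solve_two_variable_lp` is a hypothetical helper that simply checks every corner of the feasible region rather than running simplex. It estimates the shadow price the direct way: add one machine hour, re-solve, and compare profits.

```python
from itertools import combinations


def solve_two_variable_lp(profit, constraints):
    """Maximize profit[0]*x + profit[1]*y subject to a*x + b*y <= rhs and x, y >= 0.

    Works by checking every corner point of the feasible region, which is
    enough for a small two-variable teaching example.
    """
    # Add the non-negativity constraints in the same (a, b, rhs) form.
    lines = list(constraints) + [(-1.0, 0.0, 0.0), (0.0, -1.0, 0.0)]
    best_value, best_point = None, None
    for (a1, b1, r1), (a2, b2, r2) in combinations(lines, 2):
        det = a1 * b2 - a2 * b1
        if abs(det) < 1e-12:
            continue  # parallel boundaries never intersect
        # Cramer's rule for the point where the two boundaries cross.
        x = (r1 * b2 - r2 * b1) / det
        y = (a1 * r2 - a2 * r1) / det
        # Keep the point only if it satisfies every constraint.
        if all(a * x + b * y <= r + 1e-9 for a, b, r in lines):
            value = profit[0] * x + profit[1] * y
            if best_value is None or value > best_value:
                best_value, best_point = value, (x, y)
    return best_value, best_point


# Hypothetical data: profit per unit of products 1 and 2.
PROFIT = (20.0, 34.5)
# Constraint 1 (labor hours):   1*x + 1*y <= 100
# Constraint 2 (machine hours): 1*x + 2*y <= 160
LABOR = (1.0, 1.0, 100.0)
MACHINE = (1.0, 2.0, 160.0)

base_profit, base_plan = solve_two_variable_lp(PROFIT, [LABOR, MACHINE])

# Re-solve with one extra machine hour to see what that hour is worth.
one_more_hour = (MACHINE[0], MACHINE[1], MACHINE[2] + 1.0)
bumped_profit, _ = solve_two_variable_lp(PROFIT, [LABOR, one_more_hour])
shadow_price = bumped_profit - base_profit

print(f"Best plan: {base_plan}, profit: {base_profit:.2f}")
print(f"Value of one extra machine hour: {shadow_price:.2f}")
```

In practice a solver reports the shadow price directly. Re-solving with one more hour is just the most transparent way to see what the number means, and it only holds over the range where the same constraints stay binding.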

Implement is all about making it happen in a real organization. This is where analysis meets reality and reality pushes back. The model says to reallocate production across plants, but the VP of the Midwest facility isn’t going to give up volume without a fight. The optimization says to consolidate suppliers, but your best supplier is also your CEO’s college roommate. Implementation requires persuasion, political awareness, and the ability to adapt your recommendation when someone says “that’ll never work here.” Sometimes they’re right.

Now that we are familiar with these steps, the question I want to explore is: where does teaching focus within this chain, and why?

Let’s Go Back to the Pre-Excel World

Think about how quantitative methods were taught in the 1970s and 80s. If you wanted students to do a regression, they calculated coefficients by hand. Linear programming meant doing simplex iterations on paper. I still remember (and I am dating myself here) pivoting through simplex tableaus by hand, tracking basic and non-basic variables across iterations.

What was the teaching focused on? Almost entirely on Solve. The textbook gave you a well-defined problem: “Given this objective function and these constraints, find the optimal solution.” Nobody asked where the formulation came from. Nobody tested whether the student could look at a business situation and set up the problem. The problem arrived pre-formulated. Your job was to execute. And this made sense! Computation was the bottleneck. It was genuinely hard and time-consuming. If you could execute the math correctly, you were considered analytically competent. Teaching focused on the constraint.

What Changed Post-Excel

Let’s now think about what happened to teaching when Excel (and other similar software) made computation trivially easy. Where did the focus shift?

I would argue that the focus broadened away from Solve in both directions. Upstream, teaching evolved to emphasize Formulate. Downstream, it grew to include Interpret. The hallmark of modern quantitative teaching is the contextual problem: “Here’s a paragraph about a factory. Identify the decision variables. Write the constraints. Set up the model in Excel.” The bottleneck shifted from cranking the math to translating a business description into a formal structure. And just submitting a numerical solution is no longer enough -- we ask students for comprehensive reports with sensitivity analysis, charts, and answers to questions like “what does this p-value mean?”

If you picture the five-step chain, the zone of educational focus expanded from a point in the middle to a broader band of Formulate through Interpret, with Solve being outsourced to Excel. But notice what was still left out. Diagnose was handled by the case or the problem description. Someone told you this was a regression problem. Implement was considered outside the scope of analytical training, a “soft skill” for another course. Education had expanded, but it hadn’t reached the edges.

Now LLMs Are Shifting the Bottleneck ... Again

So here we are. Let me describe what LLMs actually do well, because I think the debate sometimes conflates different capabilities. Give an LLM a business description---“We have a factory that makes two products, here are the profit margins, here are the machine hour constraints”---and it will formulate the LP. Define your data and it will run the regression, interpret the coefficients, flag potential issues like multicollinearity, and write up the findings. It handles Formulate, Solve, and Interpret with remarkable competence.

This is exactly why my colleagues are worried. The entire zone that education expanded into during the Excel era---from Formulate through Interpret---is being automated. But here’s the thing. Rather than seeing this as a crisis, I think it helps to apply a principle we teach in our own operations classes. Goldratt’s Theory of Constraints tells us: find the bottleneck, focus on it, and when it moves, follow it. We’ve been teaching students to do this with factory floors and supply chains for decades. Maybe it’s time we applied it to our own profession.

The bottleneck has shifted again. Where has it gone? If I look at the five-step chain and ask “what can LLMs NOT do?”, the answer becomes clear pretty quickly: LLMs cannot Diagnose and they certainly cannot Implement. Diagnosis requires judgment under ambiguity, domain intuition, and interactive information-gathering where the quality of your questions determines the quality of information you get. Implementation requires persuasion, political navigation, and the ability to adapt when a real organization pushes back on your elegant optimal solution. Both are messy, contextual, and deeply human -- which is exactly why they’ve resisted automation through every technology wave so far.

So Where Does This Leave Us?

The framework I proposed suggests a few directions:

First, the cheating problem is a symptom, not the disease. When we worry about students using ChatGPT on homework and exams, we’re worrying about the wrong thing. The deeper issue is that we’re still testing skills in the Formulate-Solve zone---“here’s a description, set up and solve the model”---which is exactly what is being commoditized. Even if students weren’t cheating, we’d need to rethink what we’re testing.

Second, we should be focusing our energy on teaching and testing diagnosis and implementation. These are the skills at the new bottleneck, and they’ve been largely absent from quantitative curricula, not because they don’t matter, but because there was no scalable way to assess them. That’s changing. LLMs now make it possible to build diagnostic assessments that simply didn’t exist five years ago: an AI persona representing a manager who responds dynamically to student questions, reveals data only when asked the right things, and presents realistic ambiguity. But diagnosis doesn’t always require a persona. In a recent class where I teach students to build and test operational hypotheses, I gave them actual financial statements from a real company and asked them to construct a ROIC tree to test their ideas for improving the company's profitability -- no problem statement, no hints about what to look for. LLMs were actually helpful here, not as a shortcut but as a tool for stitching information together so students could validate their emerging ideas. The same technology that commoditized formulation now enables assessment of the skill that replaces it. Building these assessments is harder than it sounds, and it's one of the problems folks at Samvid are working hard to solve.

Third, the middle still matters, but its purpose changes. I’m not saying we should stop teaching regression or optimization. Students still need to understand the basic ideas well -- in fact, even as I work on developing apps that test diagnostic skill, I’m increasingly moving toward in-person exams to ensure better conceptual understanding. But the purpose of teaching the middle shifts: from “so you can do it” to “so you can diagnose when it’s the right tool and catch the machine when it gets it wrong.”

I'm an Associate Professor of Operations and Decision Analytics at Baruch College, Zicklin School of Business (CUNY). I also advise Samvid Inc. This piece grew out of real conversations with colleagues, many of whom are doing innovative work adapting their courses to the LLM era. I'd love to hear how others are thinking about where teaching should go next.